Tinybox & tinygrad (2026): Local FLOPS, Red/Green v2, and the Alpha Tradeoff

TLDR

SignalStack Tech Report · March 21, 2026 · AI Infrastructure / Hardware / Frameworks

Why this is on SignalStack: we cover CapEx versus cloud elasticity when it changes who owns FLOPS, data, and compiler contracts—not “GPU shopping” alone.

Tinycorp is shipping Tinybox—deep-learning workstations built around the deliberately minimal tinygrad framework—and positioning them as a performance-per-dollar alternative to six-figure enterprise racks.

As of March 2026, the story is no longer “buy a GPU”; it is about owning the stack: hardware tuned for a framework that compiles custom kernels, fuses ops aggressively, and aims to beat PyTorch on selected research workloads before tinygrad leaves alpha (internal target: Q2 2026).

Editorial — The race for local compute

By SignalStack Editorial Team · March 2026

The race for local compute is no longer just about owning a GPU; it is about owning the stack. Tinycorp has leaned into that shift with an updated Tinybox lineup that pairs commodity-class boxes with software that refuses to hide complexity behind thousands of opaque API layers.

George Hotz’s team is not selling “a Linux server with drivers.” They are selling offline sovereignty: machines marketed for “the Singularity” moment—training and inference without mandatory cloud SaaS or API keys, in a freestanding or 12U rack form factor, with values and data staying in your office.

That narrative is as much philosophy as benchmark: simplicity as a performance strategy, not a marketing slogan.

What happened

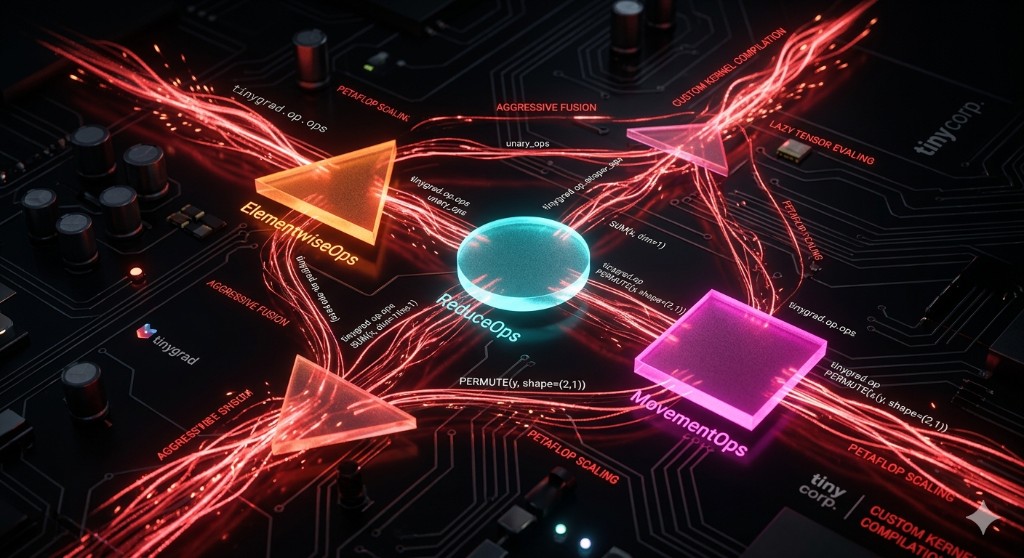

tinygrad reduces the surface area of a framework to three conceptual operation families—ElementwiseOps, ReduceOps, and MovementOps—so the compiler can reason about shapes, fuse lazily evaluated graphs, and target hardware with fewer surprises.
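To make "fuse lazily evaluated graphs" concrete, here is a toy sketch in plain Python. It is not tinygrad's API or internals; the `Lazy` class and its methods are hypothetical names invented for illustration. It shows only the general idea: elementwise ops are recorded rather than executed, and a single pass applies the whole chain when a result (or a reduction) is finally requested.

```python
# Toy illustration only: NOT tinygrad's real API or internals.
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Lazy:
    """A deferred chain of elementwise ops over a flat source buffer."""
    source: Tuple[float, ...]
    ops: Tuple[Callable[[float], float], ...] = ()

    def map(self, fn: Callable[[float], float]) -> "Lazy":
        # Record the op instead of running it; no intermediate buffer is built.
        return Lazy(self.source, self.ops + (fn,))

    def _apply(self, x: float) -> float:
        # Run every recorded op on one element, in order.
        for fn in self.ops:
            x = fn(x)
        return x

    def realize(self) -> List[float]:
        # "Fused" execution: one pass over the data for the whole chain.
        return [self._apply(x) for x in self.source]

    def reduce_sum(self) -> float:
        # A reduce consumes the fused chain without materializing it first.
        total = 0.0
        for x in self.source:
            total += self._apply(x)
        return total


chain = Lazy((1.0, 2.0, 3.0)).map(lambda x: x * 2).map(lambda x: x + 1)
print(chain.realize())     # [3.0, 5.0, 7.0]
print(chain.reduce_sum())  # 15.0
```

A real compiler would emit one hardware kernel for the chain rather than loop in Python, but the payoff is the same in spirit: fewer intermediate buffers and fewer trips through memory.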

On the hardware side, Tinybox is positioned using MLPerf Training-style narratives: compare against systems that cost multiples more, and publish TFLOPS, RAM, and GPU topology transparently. The current line centers on Red v2 and Green v2 (shipping), with a long-horizon Exabox program pointing at exa-scale FLOPS and a 2027 target window—still early, still partly roadmap.

Commercially, Tinycorp keeps the SKUs non-customizable (to protect unit economics), uses wire transfer as the payment rail, and ships from San Diego with roughly week-scale lead times after payment—constraints that read as “we are optimizing for throughput and QA, not bespoke integrators.”

Why it matters

If petaflop-class training stays locked behind cloud contracts and opaque pricing, only the largest labs can iterate on frontier models. Tinybox’s bet is commoditizing FLOPS the same way prior eras commoditized CPUs: sell boxes that amortize over research cycles instead of burning token budgets.

For startups and academic labs, that is an exit ramp from cloud GPU pricing (and, on the AMD-based Red v2, from the “NVIDIA tax” in the literal sense): CapEx-heavy but predictable, and fully under your policy and security model.
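The CapEx-versus-rental argument reduces to a break-even calculation. The sketch below is a back-of-envelope model, not a pricing claim. Only the $12,000 figure comes from this report's snapshot; the cloud hourly rate and the local running cost are made-up placeholders that you should replace with your own quotes.

```python
def breakeven_hours(capex_usd: float,
                    cloud_usd_per_hour: float,
                    local_opex_usd_per_hour: float = 0.0) -> float:
    """Hours of use after which buying is cheaper than renting.

    Returns infinity when renting is never more expensive per hour.
    """
    saving_per_hour = cloud_usd_per_hour - local_opex_usd_per_hour
    if saving_per_hour <= 0:
        return float("inf")
    return capex_usd / saving_per_hour


# Hypothetical inputs: only the 12,000 USD price is taken from the article.
hours = breakeven_hours(12_000, cloud_usd_per_hour=4.0, local_opex_usd_per_hour=0.5)
print(f"{hours:.0f} hours")                 # 3429 hours
print(f"{hours / 24:.1f} days at 24/7 use")  # 142.9 days at 24/7 use
```

The model ignores depreciation, resale value, staff time, and idle hours, all of which can move the answer substantially in either direction.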

The counterweight is maturity: tinygrad remains alpha. Production users exist (notably in adjacent work such as the openpilot ecosystem), but the framework’s contract is closer to “move fast, expect sharp edges” than “enterprise SLA.”

Key details at a glance — 2026 hardware lineup (reference)

Figures below compile public positioning; specs and pricing move. Treat this as a snapshot for Q1 2026, not a quote sheet.

| Feature | tinybox Red v2 | tinybox Green v2 | Exabox (target ~2027) |
|---|---|---|---|
| Indicative price | $12,000 | $65,000 | ~$10,000,000 (public commentary) |
| GPU setup (as positioned) | 4x AMD 9070 XT-class | 4x RTX PRO 6000 (Blackwell generation) | 720x RDNA5-class (preliminary roadmap; unverified) |
| Performance (marketing / talks) | ~778 TFLOPS (aggregate) | ~3,086 TFLOPS (aggregate) | ~1 EFLOPS class (target narrative) |
| GPU RAM (as listed) | 64 GB | 384 GB | 25,920 GB (roadmap) |
| CPU / OS | 32-core AMD EPYC, Ubuntu 24.04 | 32-core AMD Genoa-class, Ubuntu 24.04 | Custom / scale-out (early) |
| Availability | In stock (as claimed) | In stock (as claimed) | Development phase |
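Since the pitch is performance per dollar, it helps to derive the ratios directly from the snapshot figures. The short script below does only that arithmetic. The inputs are the vendor-positioned numbers from the table (with 1 EFLOPS taken as 1,000,000 TFLOPS), not measurements, and the table does not state which numeric precision the TFLOPS figures assume, so cross-column comparisons should be read loosely.

```python
# Figures copied from the snapshot table; vendor positioning, not benchmarks.
lineup = {
    "Red v2":   {"price_usd": 12_000,     "tflops": 778,       "gpus": 4,   "gpu_ram_gb": 64},
    "Green v2": {"price_usd": 65_000,     "tflops": 3_086,     "gpus": 4,   "gpu_ram_gb": 384},
    "Exabox":   {"price_usd": 10_000_000, "tflops": 1_000_000, "gpus": 720, "gpu_ram_gb": 25_920},
}


def derived(spec: dict) -> dict:
    """Compute simple per-dollar and per-GPU ratios for one system."""
    return {
        "gflops_per_usd": round(spec["tflops"] * 1000 / spec["price_usd"], 1),
        "ram_per_gpu_gb": spec["gpu_ram_gb"] / spec["gpus"],
    }


for name, spec in lineup.items():
    print(name, derived(spec))
# Red v2 {'gflops_per_usd': 64.8, 'ram_per_gpu_gb': 16.0}
# Green v2 {'gflops_per_usd': 47.5, 'ram_per_gpu_gb': 96.0}
# Exabox {'gflops_per_usd': 100.0, 'ram_per_gpu_gb': 36.0}
```

On these numbers the Red v2 leads the shipping boxes on FLOPS per dollar, while the Green v2 buys six times the memory per GPU, which is often the binding constraint for larger models.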

Software thesis (recap): ElementwiseOps handle dense math across tensors; ReduceOps shrink tensors (SUM, MAX, …); MovementOps reshape and permute with ShapeTracker-style semantics so “virtual” moves avoid unnecessary physical copies when possible.
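The "virtual move" idea can be illustrated with a strided view over a flat buffer. This is a generic sketch of the technique, not tinygrad's ShapeTracker; the `View` class and its method names are hypothetical. A permute only rewrites shape and stride metadata, and a reduction then reads through the view without the data ever being copied.

```python
# Toy strided view: illustrates zero-copy movement ops. Not tinygrad code.
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class View:
    buf: List[float]          # shared flat storage, never copied by this class
    shape: Tuple[int, ...]
    strides: Tuple[int, ...]

    @staticmethod
    def contiguous(buf: List[float], shape: Sequence[int]) -> "View":
        # Row-major strides: the last axis moves one element at a time.
        strides, step = [], 1
        for dim in reversed(shape):
            strides.append(step)
            step *= dim
        return View(buf, tuple(shape), tuple(reversed(strides)))

    def permute(self, order: Sequence[int]) -> "View":
        # Movement op: reorder metadata only; the buffer is untouched.
        return View(self.buf,
                    tuple(self.shape[i] for i in order),
                    tuple(self.strides[i] for i in order))

    def __getitem__(self, idx: Tuple[int, ...]) -> float:
        # Translate a logical index into a position in the flat buffer.
        return self.buf[sum(i * s for i, s in zip(idx, self.strides))]

    def row_sums(self) -> List[float]:
        # Reduce op over the last axis of a 2-D view, read through strides.
        rows, cols = self.shape
        return [sum(self[r, c] for c in range(cols)) for r in range(rows)]


data = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
m = View.contiguous(data, (2, 3))   # [[0, 1, 2], [3, 4, 5]]
mt = m.permute((1, 0))              # transposed view, same storage

print(mt.shape, mt.strides)  # (3, 2) (1, 3)
print(mt.buf is data)        # True
print(m.row_sums())          # [3.0, 12.0]
print(mt.row_sums())         # [3.0, 5.0, 7.0]
```

Not every movement can stay virtual: some reshapes of a permuted view have no single stride description and force a copy, which is why the text says "when possible."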

Commercial rules that matter: no custom SKUs; wire transfer only; San Diego fulfillment; alpha software expectations.

What to watch next

- Exabox — Whether Tinycorp can ship a credible exa-scale story without vaporware optics—MLPerf disclosures, power, cooling, and serviceability beat slide FLOPS.

- tinygrad alpha exit — The project’s bar (e.g. 2× vs PyTorch on selected paper reproductions on a single NVIDIA GPU, plus Apple-silicon work) decides if “minimal ops” wins outside niche kernels.

- Hiring and bounties — Velocity versus demand signals across software, ops, and hardware.
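For readers who want to check a "2× versus PyTorch" style claim on their own workloads, a minimal timing harness might look like the sketch below. It is framework-agnostic and uses only the standard library. The function names are hypothetical, and the sample timings are invented to show the arithmetic, not measured results.

```python
import statistics
import time
from typing import Callable, List


def time_runs(fn: Callable[[], object], repeats: int = 5) -> List[float]:
    """Wall-clock seconds for each of `repeats` calls to fn()."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return samples


def speedup(baseline_s: List[float], candidate_s: List[float]) -> float:
    """Median baseline time divided by median candidate time."""
    return statistics.median(baseline_s) / statistics.median(candidate_s)


def meets_bar(baseline_s: List[float], candidate_s: List[float], bar: float = 2.0) -> bool:
    """True when the candidate is at least `bar` times faster."""
    return speedup(baseline_s, candidate_s) >= bar


# Invented timings in seconds, for illustration only.
baseline = [1.00, 1.10, 0.90]
candidate = [0.50, 0.45, 0.55]
print(speedup(baseline, candidate))    # 2.0
print(meets_bar(baseline, candidate))  # True
```

On GPUs, work is typically launched asynchronously, so each timed callable must wait for the device to finish before returning; otherwise the harness measures launch overhead rather than compute. Warm-up runs to exclude compilation time are also worth adding for any compiler-first framework.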

FAQ

Q: What is Tinybox for?

A: Purpose-built deep learning training and inference on local iron, marketed for teams that want offline capability without paying per-token cloud fees.

Q: How do you buy one?

A: Ordering flows through Tinycorp’s site; wire transfer is the stated payment rail; shipping is typically quoted at around one week after payment, with pickup in San Diego or worldwide shipping as options.

Q: Can I customize a Tinybox?

A: No. Non-custom SKUs are part of the cost/quality posture.

Q: What is tinygrad relative to Tinybox?

A: Tinybox is the hardware; tinygrad is the software framework tuned to run on it (and elsewhere). Same company, different product category.

Q: Is tinygrad “production ready”?

A: It remains alpha. It is usable for serious work in some domains, but expect breaking changes and rough edges compared with PyTorch’s maturity.

The SignalStack angle

What we are not doing: recommending Tinybox for every team. What we are doing: treating it as a coherent wager on local control—CapEx, transparency, and a compiler-first framework over cloud convenience.

1. Threat model drives the SKU

If data residency, reproducibility, or owning the training trail matters, local iron can be rational. If you need elastic burst and managed scale, it is the wrong tool.

2. FLOPS live in hardware and compiler contracts

The market needs more than one answer to “where do the FLOPS live?”—Tinybox is one narrative that treats that question as hardware plus software, not a billing SKU alone.

Disclaimer: Specs and pricing are vendor positioning; verify before purchase.