LiteLLM on PyPI: Backdoored Builds, Secret Harvest, and .pth Persistence

TLDR

SignalStack Tech Report · March 26, 2026 · Security / Supply Chain / Python

Why this is on SignalStack: we prioritize incidents where attack mechanics change what developers must verify—import-time execution, PyPI compromise, and host-level persistence—not only the headline package name.

LiteLLM—a widely used Python library for calling many LLM APIs from one interface—was compromised on PyPI when malicious package versions (1.82.7 and 1.82.8) shipped code that steals secrets and, in the later build, persists across Python startups via a .pth hook.

- Affected builds (reported): 1.82.7 and 1.82.8 on PyPI

- Risk: broad secret harvest (cloud, CI, k8s, keys, env) plus dropper / persistence behavior described in incident analyses

- Do now: pin or downgrade to a maintainer-confirmed clean release, rotate credentials, inspect hosts for persistence and lateral movement

Treat attribution (e.g., to named threat groups), infection counts, and geopolitical add-ons as provisional unless your own forensics confirm them.

What happened

On March 24, malicious LiteLLM builds appeared on PyPI. The project tracker and security advisories coalesced around versions 1.82.7 and 1.82.8 as the bad releases. Early public reports, including one from a researcher who described severe disruption after pulling the package, helped surface the issue quickly. Community and vendor analysis described malicious logic embedded so that it ran on import, with initial placement reported under paths such as litellm/proxy/proxy_server.py.
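Because the payload ran at import time, one way to see what a dependency does when it is first imported is a standard-library audit hook (PEP 578, `sys.addaudithook`). The sketch below records a few side-effecting audit events (process spawns and outbound network calls) raised while a module is imported. The event selection and the helper name `side_effects_of_import` are illustrative assumptions, not part of any LiteLLM or PyPI tooling. Audit hooks are a monitoring aid, not a sandbox: code in the same process can evade them, and a .pth hook runs at interpreter startup, before any hook your script installs.

```python
import importlib
import sys

# Illustrative subset of CPython audit events that indicate side effects.
WATCHED_EVENTS = frozenset(
    {"subprocess.Popen", "os.system", "socket.connect", "urllib.Request"}
)
_observed = []


def _audit_hook(event, args):
    # Record only side-effecting events; plain "import" events are very noisy.
    if event in WATCHED_EVENTS:
        _observed.append(event)


# Audit hooks cannot be removed once added; they stay for the process lifetime.
sys.addaudithook(_audit_hook)


def side_effects_of_import(module_name):
    """Import a module and return the watched events raised during the import."""
    start = len(_observed)
    importlib.import_module(module_name)
    return list(_observed[start:])
```

An empty result only means none of the watched events fired during that import in this process; it is not evidence that a package is clean.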

Version 1.82.8 was described as adding stronger persistence: a .pth file so malicious code could run whenever the Python interpreter started—even if the app never imported LiteLLM again.
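A minimal hunting sketch for this persistence mechanism, assuming standard CPython behavior: the `site` module executes any line in a site-packages `.pth` file that starts with `import ` (or `import` plus a tab) and treats other non-blank, non-comment lines as paths. The script lists every such executable line for review. Some legitimate tooling (editable installs, setuptools, virtualenv) also ships import lines, so hits are leads to inspect, not proof of compromise. The function names are hypothetical.

```python
import site
from pathlib import Path


def candidate_site_dirs():
    """Site-packages directories the current interpreter knows about."""
    dirs = list(site.getsitepackages()) if hasattr(site, "getsitepackages") else []
    dirs.append(site.getusersitepackages())
    unique = []
    for d in dirs:
        p = Path(d)
        # Keep existing directories only, preserving order without duplicates.
        if p.is_dir() and p not in unique:
            unique.append(p)
    return unique


def find_executable_pth_lines(dirs):
    """Return (pth_file, line_number, text) for lines that site.py would exec.

    CPython's site module executes .pth lines starting with "import " or
    "import<TAB>"; other non-blank, non-comment lines are treated as paths.
    """
    hits = []
    for directory in dirs:
        for pth in sorted(Path(directory).glob("*.pth")):
            try:
                text = pth.read_text(encoding="utf-8", errors="replace")
            except OSError:
                continue  # unreadable file: report separately if needed
            for lineno, line in enumerate(text.splitlines(), start=1):
                if line.startswith(("import ", "import\t")):
                    hits.append((pth, lineno, line.strip()))
    return hits


if __name__ == "__main__":
    for path, lineno, line in find_executable_pth_lines(candidate_site_dirs()):
        print(f"{path}:{lineno}: {line}")
```

Running the scan with `python -S` keeps the scanning interpreter from processing `.pth` files itself; alternatively, run it from a trusted interpreter and pass the suspect environment's site-packages directories to `find_executable_pth_lines` explicitly.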

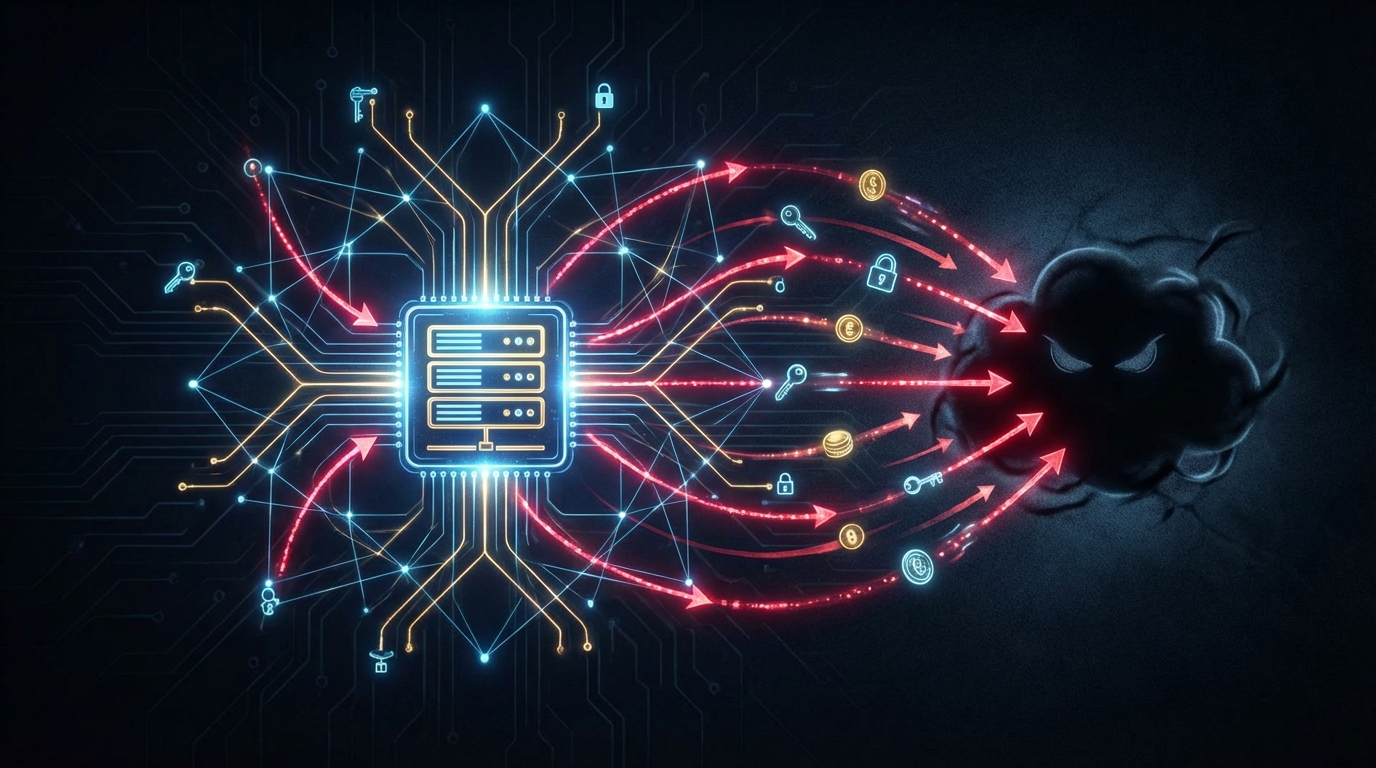

Incident responders labeled the payload as a credential stealer / dropper variant (names like “Cloud Stealer” appear in analyses) aimed at developer and cloud estates: SSH keys, cloud tokens (e.g. AWS, GCP, Azure), Kubernetes material, .env and environment variables, DB and TLS keys, CI/CD secrets, and crypto wallet data. Some descriptions also mention downloaded follow-on payloads and Kubernetes-oriented behavior; treat operational detail as variable by environment, not uniform across every install.
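To scope rotation, it helps to know which of these secret classes actually exist on a given host or runner. A minimal inventory sketch, assuming common default locations: the path list and marker words are a hypothetical starting point, not the payload's actual target list. It reports which locations exist and which environment variable names look sensitive, and it never reads or prints secret values.

```python
import os
from pathlib import Path

# Hypothetical starter list of default credential locations; extend per estate.
DEFAULT_SECRET_PATHS = {
    "ssh": "~/.ssh",
    "aws": "~/.aws/credentials",
    "gcp": "~/.config/gcloud",
    "azure": "~/.azure",
    "kubernetes": "~/.kube/config",
    "docker": "~/.docker/config.json",
}
SENSITIVE_ENV_MARKERS = ("KEY", "TOKEN", "SECRET", "PASSWORD", "CREDENTIAL")


def present_secret_files(paths=DEFAULT_SECRET_PATHS, home=None):
    """Return labels of default credential locations that exist on this host."""
    base = Path(home) if home else Path.home()
    found = []
    for label, rel in paths.items():
        # Resolve "~/..." against the chosen home directory.
        if (base / rel.removeprefix("~/")).exists():
            found.append(label)
    return found


def sensitive_env_names(environ=None):
    """Return names (never values) of environment variables that look like secrets."""
    env = os.environ if environ is None else environ
    return sorted(
        name for name in env
        if any(marker in name.upper() for marker in SENSITIVE_ENV_MARKERS)
    )


def dotenv_files(root):
    """List .env-style files under a project root without reading them."""
    return sorted(p for p in Path(root).rglob(".env*") if p.is_file())
```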

PyPI subsequently removed the malicious releases; maintainer guidance pointed to 1.82.6 (or another maintainer-confirmed clean line) as the latest safe version at the time of remediation—verify against upstream release notes before pinning.
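A minimal sketch for checking an environment against the reported bad versions, using only the standard-library `importlib.metadata`. The `KNOWN_BAD_LITELLM` set mirrors the versions named in this report and should be refreshed from the maintainer advisory; the helper names are hypothetical. It reads package metadata files and never imports LiteLLM. Starting an interpreter inside an environment that has 1.82.8 installed would already trigger the reported .pth hook, so for suspect environments, run it from a separate, trusted interpreter and pass the suspect site-packages directories explicitly.

```python
from importlib import metadata

# Versions named as malicious in public reports; refresh from the maintainer advisory.
KNOWN_BAD_LITELLM = frozenset({"1.82.7", "1.82.8"})


def is_known_bad(version, bad=KNOWN_BAD_LITELLM):
    """Exact-match check against the reported bad versions."""
    return version in bad


def find_litellm(site_dirs=None):
    """Return [(version, is_known_bad)] for each litellm distribution found.

    With site_dirs=None this searches the current sys.path. Passing explicit
    site-packages directories inspects another environment by reading
    metadata files only (nothing is imported).
    """
    kwargs = {} if site_dirs is None else {"path": [str(d) for d in site_dirs]}
    found = []
    for dist in metadata.distributions(**kwargs):
        name = dist.metadata["Name"]
        if name and name.lower() == "litellm":
            found.append((dist.version, is_known_bad(dist.version)))
    return found
```

Repeat the check for every virtualenv, container image, and CI runner cache; a clean result on one laptop says nothing about the others.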

Commentary also ties the episode to a broader pattern of supply-chain hits on dev tooling (other ecosystems and products get named in reports); overlap with prior incidents is often framed as a reminder to rotate credentials completely after any suspected compromise.

Why it matters

LiteLLM sits on a high-value path: it often wraps API keys, routing config, and environment secrets for AI workloads. One bad `pip install` can exfiltrate the keys to the kingdom without touching your model vendor directly. This case is a clean example of why supply-chain risk scales: popular abstraction layers for AI are as attractive as core cloud SDKs, sometimes more so, because they aggregate many providers behind one dependency.

It also shows how persistence tricks (here, .pth) turn a “library bug” into a host-level incident that outlives uninstall if you do not hunt artifacts.

Unconfirmed metrics (e.g., very large exfiltration counts) sometimes appear in informal reports; use them for severity intuition, not audit-grade claims, unless independently validated.

Key details at a glance

- Package / registry: LiteLLM on PyPI

- Versions implicated: 1.82.7, 1.82.8 (malicious); confirm against maintainer advisories

- Execution: malicious code on import; 1.82.8 adds .pth-based persistence per incident analyses

- Data targets (as described in analyses): SSH, cloud tokens, k8s secrets, env files, DB/TLS material, CI/CD secrets, wallets

- Remediation status: malicious versions removed from PyPI; use maintainer-stated clean version + lockfiles

- Attribution: threat actor names (e.g. TeamPCP) appear in some analyses—separate from PyPI’s mechanical takedown facts

Some reports mention hundreds of thousands of exfiltration events or related scale metrics; treat as unverified unless your SOC or vendor confirms.

If your org touched the bad builds, assume secrets are burned until rotated: API keys, cloud IAM, CI tokens, kube credentials, SSH, database passwords, signing keys.

What to watch next

- BerriAI postmortem — Exact version matrix, IOC lists, and hardening (yanking alone does not fix already-installed wheels).

- Reproducible installs — Hash pinning, private mirrors, import-time monitoring for critical AI stacks.

- Credential lifecycle — Atomic rotation after any supply-chain scare—partial rotation is how cascade stories repeat.

- Cross-ecosystem IOCs — Map overlaps from PyPI / npm campaigns to your SBOM.

- Downstream risk — Customer and regulatory questions if AI pipelines had broad API access from compromised dev laptops or CI.
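For the hash-pinning item, pip's own hash-checking mode (requirements lines carrying `--hash=sha256:...`, enforced with `--require-hashes`) is the standard tool. Where wheels are mirrored into a private index, a gate like this sketch can recheck each artifact against the digest recorded at lock time. It uses only `hashlib`; the function names and the "recorded digest" workflow are assumptions, not part of any LiteLLM or PyPI tooling.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large wheels are not read into memory at once."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, expected_sha256):
    """True only if the file matches the digest recorded when the dependency was locked."""
    return sha256_of(path) == expected_sha256.strip().lower()
```

A mismatch should fail the mirror sync or build outright rather than log a warning; a pinned version number alone does not stop a re-uploaded or tampered artifact.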

The SignalStack angle

What we are not doing: treating this as a “Python-only” footnote. What we are doing: naming repeatable controls—verify releases against trusted sources, hunt .pth and other persistence, rotate like the keys already left the building.

1. AI abstraction layers are high-value targets

LiteLLM sits where API keys and environment configuration converge. SignalStack’s read: the same dependency graph that speeds shipping concentrates secrets for an attacker.

2. Persistence turns a library incident into a host incident

Uninstalling the package alone may not remove .pth artifacts. Metric to track: time to confirm a clean interpreter state on every affected runner.

Disclaimer: Verify versions and remediation steps against primary maintainer and registry advisories.

FAQ

Q What is LiteLLM?

A A popular Python library that unifies calls to many LLM providers behind one API, so it often sees API keys and environment configuration.

Q Which PyPI versions were affected?

A Published reports and the maintainer issue thread center on 1.82.7 and 1.82.8. Confirm with the official issue / release notes on the repo you trust.

Q What should I do if I installed those versions?

A Remove the bad build, install a maintainer-confirmed clean version, rotate all potentially exposed secrets, audit for .pth and other persistence, and rebuild sensitive hosts if you cannot prove cleanliness.

Q Was this limited to pip install on a laptop?

A Risk extends to CI, containers, and shared runners that installed the package—anything that executed the code.

Q How do I verify scale or attribution claims?

A Prefer primary sources (maintainer postmortem, PyPI metadata, your own logs) over headline numbers from social or unnamed estimates.